ARTILACS Research Training Group

Since early 2025, the HFBK Hamburg has been part of the inter-university Artistic Intelligence in Latent Creative Spaces (ARTILACS) research training group. ARTILACS aims to investigate the extent to which a hybrid combination of AI-supported latent spaces and traditional knowledge spaces of artistic practice opens up opportunities for new forms of creativity and insight. In addition to the HFBK Hamburg, the University of Music and Theater (HfMT), the University of Applied Sciences (HAW), and HafenCity University (HCU) are also involved in the program.

Funded project

Simone Niquille: »Parametric Truth: Synthetic Training Data and the Slippery Slope of Ground Truth«

Simone C. Niquille investigates how synthetic training data—particularly 3D models in computer vision—shape the understanding of “truth” in AI systems. Her research analyzes the blurring of reality and simulation in the domestic image context and reflects on the aesthetic, political, and ethical dimensions of AI-generated images. »Parametric Truth« critically examines how AI constructs reality instead of representing it—a central concern of ARTILACS. Niquille’s work questions cultural assumptions in AI systems and develops tools to analyze software beyond its functional use.

Born in Switzerland. BFA in Graphic Design from the Rhode Island School of Design and MA in Design from the Sandberg Institute Amsterdam. Since 2025, PhD researcher in the ARTILACS program at the HFBK Hamburg (Digital Graphics class). Founder of the Amsterdam-based studio technoflesh. Works as a designer and researcher at the intersection of computer vision, digital graphics, critical software studies, and speculative design. Lecturer at the Design Academy Eindhoven and the Amsterdam Academy of Architecture.

Marco De Mutiis introduces the work of Simone C Niquille

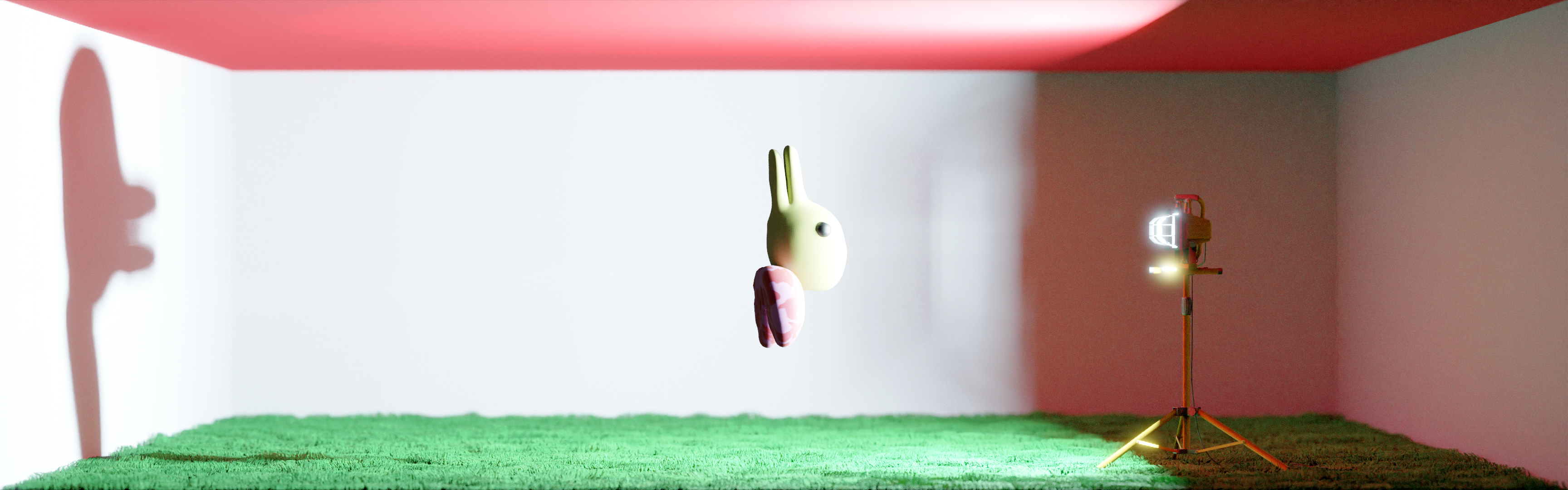

The worlds of Simone C Niquille unfold with a deceiving, almost childlike innocence. Their protagonists, seemingly harmless, disarm with a cuteness that conceals something more unsettling beneath the surface. A naïve household cleaning robot learning to see, a duckrabbit on a journey of self-discovery, and an autonomous chair attempting to navigate a domestic space are among the figures that inhabit Niquille’s environments. Through uneasy movements and childlike voices, these characters question how they perceive and interpret the world, probing the very structures that shape their design. “Objects in the mirror are closer than they appear. But where do these objects appear?” wonders a bewildered Computer Generated Imagery (CGI) rendition of the duckrabbit, a character first made famous by Ludwig Wittgenstein’s work on perception. The domestic floor-cleaning robot is surprised by what appears inside the home, and hesitates before a simple object, unsure if it is a vase, a bowl, or a cup.

Yet slowly, out of this cuteness and tender helplessness, unease begins to surface. As we are drawn into computational ways of seeing, experiencing the world in first person through machinic eyes that govern contemporary systems, we begin to grasp the consequences of living alongside computer vision and imaging technology. In duckrabbit.tv (2023), behind the playful question “Am I rabbit or am I duck?” lies the erasure of ambiguous entities, liminal spaces and queer identities as incomputable states from the perspectives of machines. As these imaging systems often act as substitutes for what’s real, machine vision does not allow the duckrabbit to ever be both, only either duck or rabbit. This new “real” is precisely what Simone C Niquille is so skillfully able to depict, opening up the black boxes to reveal the invisible processes of computational photography, the politics of datasets and digital renders.

The childlike narrator’s voice that accompanies many of the artist’s characters immediately draws us back to the process of learning, to the act of being taught the order of things, and to the ways this process underpins contemporary devices and vision technologies. Who decides what is a face, what is a body, that a box is a chest but not a mailbox, or that a chair must have four legs rather than being any object we can sit on? When a cleaning robot tries to understand what an object is, the issue is not merely semantic. Behind each definition lie subjective and sensorial experiences, cultural contexts, and relational meaning-making. The hidden processes and labor behind machine vision systems suddenly come into view: the assembling of vast datasets, with millions of annotated photorealistic images and 3D scans captured from domestic spaces. This data, moving from photographs annotated by a global workforce of distributed “cloud workers” (in the case of datasets like ImageNet) to automated synthetic datasets (which is the case for SceneNet RGB-D), produces the abstracted references and raw material on which machine learning systems base their understanding of the world.

These digital imaging technologies are the product of different economies and political forces, and have been developed by both scientific disciplines and entertainment companies. In fact, it’s precisely the intersections between these approaches that render their ubiquitous adoption dangerous and important to investigate. Writing about the 2012 shooting of Trayvon Martin by George Zimmerman, Simone C Niquille has shown how the 3D animated reconstruction of the crime used the same motion capture technology employed in the entertainment industry to surpass the human body and inhabit any fantastical creature. Similarly, the political implications embedded within datasets used for face recognition and object detection, as well as the constant attempts of developers to categorize the world, are a consistent focus in the artist’s research. In video works such as Homeschool (2019) and Sorting Song (2021), Niquille takes us to the boundaries of categorization, revealing the impossibility of drawing objective lines between one definition and the next. We are dropped into these ambiguous spaces, where friction emerges between models of reality and reality itself. Here, we witness the glitches and failures of the computational eyes entrusted to govern society. And when the clueless children’s voices, guessing at what lies before them, are revealed as stand-ins for systems tied to governance, security, surveillance, and justice, the playground quickly turns nightmarish.

Niquille’s video work Elephant Juice (2020) stages a person nervously preparing for an automated job interview in front of a mirror, training their facial muscles to be legible to a webcam. Moving the focus from objects to human emotions, this work explores algorithmic evaluation of qualities such as trustworthiness, compatibility, or diligence in the workplace. Such systems translate facial expressions into emotional categories by relying on taxonomies like the Facial Action Coding System (FACS), a system developed in the 1970s, which divides the human face into discrete zones of movement that are assigned specific emotions. Once again, this technology is widely adopted in fields as diverse as computer animation, advertising metrics, police profiling, and human resources, embodying the enduring belief that emotions can be quantified and reduced to measurable signs. Elephant Juice challenges this assumption by exposing the fragility of such systems through their glitches—beginning with the work’s very title. In lip-syncing technologies, the phrase “I love you” is often mistranscribed as “elephant juice.” This absurd mistranslation, at once meaningless and strangely poetic, becomes a reminder of the impossibility of fully interpreting a body. It echoes philosopher Thomas Nagel’s seminal essay “What Is It Like To Be a Bat?”, where the limits of empathy and perception are confronted. A bat, in fact, is also the unlikely narrator of Elephant Juice, a potent metaphor for AI systems programmed to see without eyes, forever fumbling at the edges of understanding.

The final turn, after inhabiting the worlds of Simone C Niquille and stepping back into our own, is the unsettling realization that these are not whimsical fairy tales populated by cute robots and charming creatures, but the actors of our everyday reality. As the world is increasingly “seen” and understood through these processes, machinic perception becomes not just a way of looking but the dominant force shaping reality itself. This compels us not only to join the duckrabbit and Niquille’s characters in questioning which places we are permitted to inhabit, but also to resist the erasure of ambiguity—embracing glitches and moving through errors as sites of resistance and refusal. Tender and cute though it may be, a resistance both necessary and unstoppable.

Marco De Mutiis is Digital Curator at Fotomuseum Winterthur in Switzerland, where he leads the museum research on algorithmic and networked images. He develops and co-curates different digital projects and online platforms expanding the role and the space of the museum. He co-curated the exhibition The Lure of the Image (with Doris Gassert and Alessandra Nappo), and the exhibition How to Win at Photography – Image-making as Play (with Matteo Bittanti). He has written, edited and contributed to several publications, including the recent volumes Screen Images – In-Game Photography, Screenshot, Screencast (co-edited with Winfried Gerling and Sebastian Möring) and The Photographer’s Guide to Los Santos (co-authored with Matteo Bittanti).